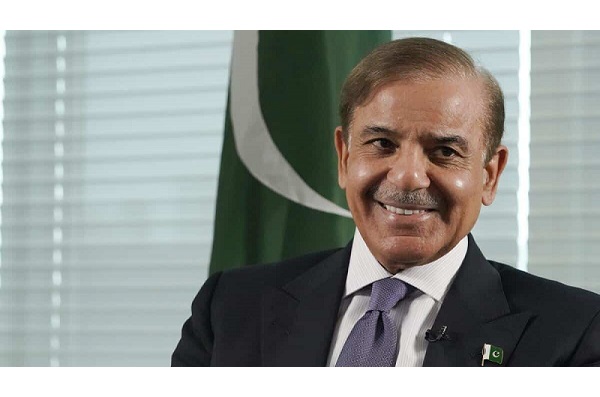

More than six million videos were removed from TikTok in Pakistan in three months, the app said on Wednesday, as it battles an on-off ban in the country.

Wildly popular among Pakistani youth, the Chinese-owned app has been shut down by authorities twice over “indecent” content, most recently in March, after which the company pledged to moderate uploads.

“In the Pakistani market, TikTok removed 6,495,992 videos making it the second market to get the most videos removed after the USA, where 8,540,088 videos were removed,” TikTok Pakistan's latest transparency report said on Wednesday, covering January to March.

Around 15 per cent of the removed videos were “adult nudity and sexual activities”.

A spokesman said the Pakistan-made videos were banned as a result of both user and government requests.

Earlier this month, small anti-TikTok rallies were held against what protesters called the spreading of homosexual content.

“One can speculate that this is a result of government pressure or a reflection of the large volume of content produced in Pakistan given the popularity of the platform, or both,” said digital rights activist Nighat Dad.

“Social media platforms are more willing to remove and block content in Pakistan to evade complete bans,” she said.

It comes as the app faces a fresh court battle in Karachi, where a Sindh High Court (SHC) judge has asked the Pakistan Telecommunication Authority to suspend it for spreading “immoral content”. The platform is still working in Pakistan, however.

Freedom of speech advocates have long criticised the creeping government censorship and control of Pakistan's internet and media.

Dating apps have been blocked, and last year Pakistani regulators asked YouTube to immediately block all videos they considered “objectionable” from being accessed in the country, a demand criticised by rights campaigners.

More than 7m accounts removed

TikTok also removed more than seven million accounts of users suspected of being under age 13 in the first three months of 2021, it said in a report.

The app said it took down nearly 62m videos in the first quarter for violating community standards, including rules on “hateful” content, nudity, harassment and the safety of minors.

In its first disclosure on underage users, TikTok said it uses a variety of methods, including a safety moderation team that monitors accounts where users are suspected of being untruthful about their age.

Those age 12 or younger are directed to “TikTok for Younger Users” in the United States.

TikTok's transparency report said that, in addition to the accounts of suspected underage users, accounts belonging to nearly four million users were deleted for violating the app's guidelines.
